Role: Design Lead & PM

Scope: Zero-to-one internal AI product. Shipped MVP in 4 weeks. Scaled to 500+ users in 12 weeks through continuous production releases. Flint predated the current generation of no-code AI builders. At the time, there was no established playbook for how these tools should work or who they were for.

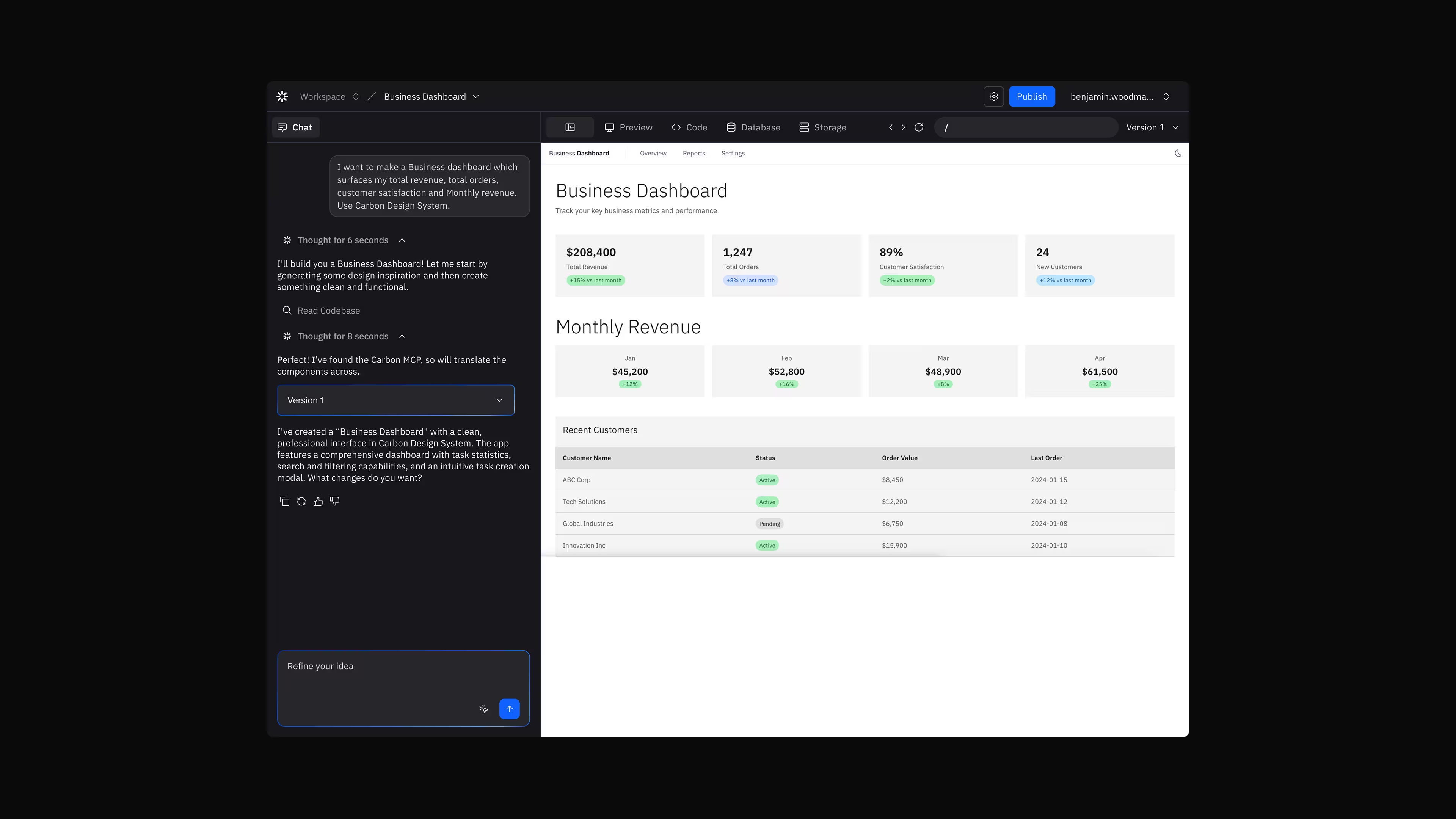

Flint was an internal natural language-to-application builder, similar to tools like v0 or Lovable, designed to allow non-technical IBM teams to create functional mini-apps from text prompts.

I led product design and product strategy from inception through scale, shipping an MVP in four weeks and growing adoption to 500+ internal users within 12 weeks. I worked directly in production code to prototype new interaction patterns and ran weekly user testing to inform rapid iteration. We collaborated with the Carbon MCP team to keep outputs aligned with IBM's design system and brand standards.

Problem

Non-technical teams across IBM wanted to prototype internal tools to improve workflows, but building even simple applications required engineering support. Existing no-code tools struggled to produce outputs aligned with IBM’s Carbon design system and enterprise standards, which reduced trust and limited real adoption.

What I designed

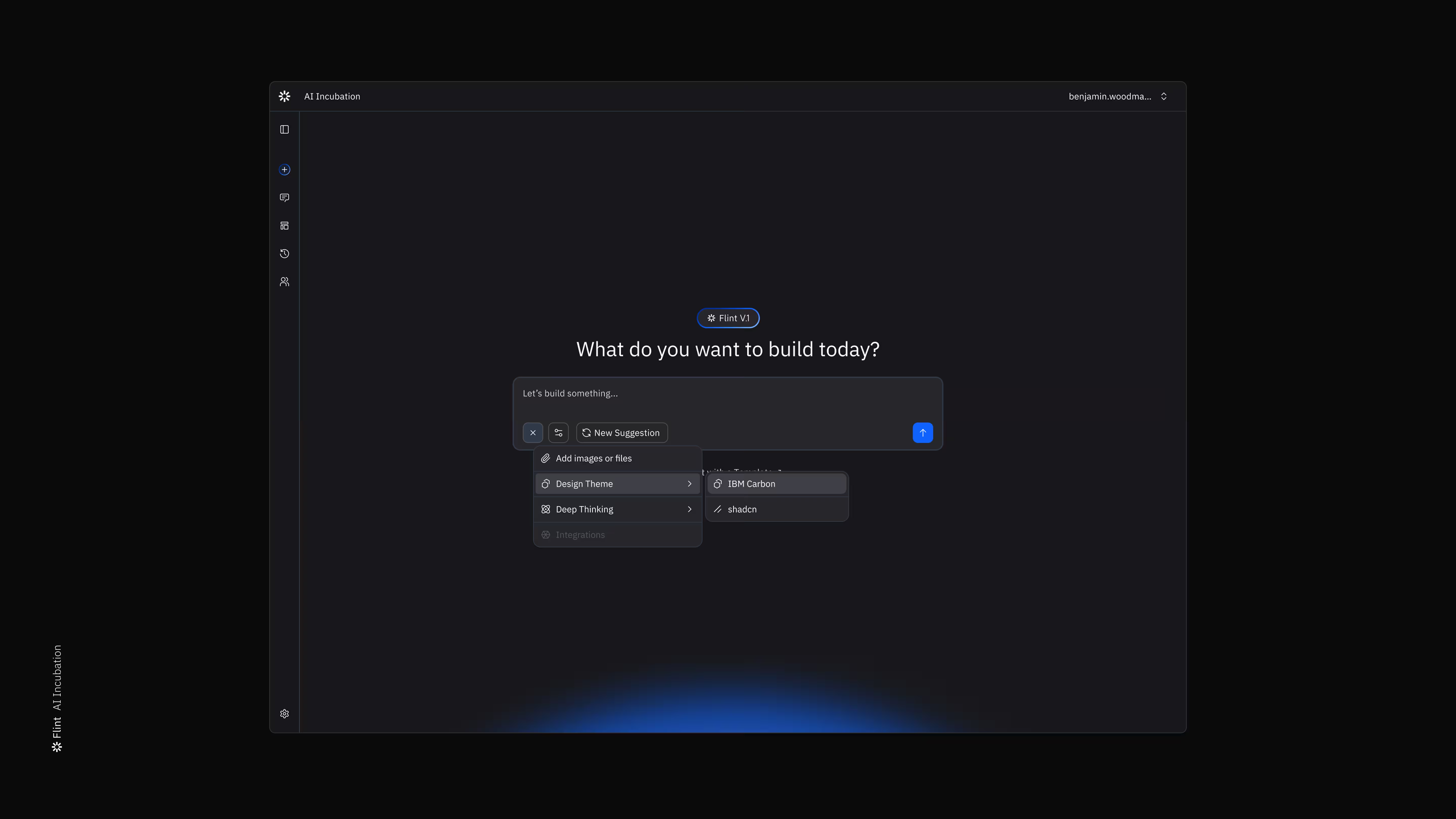

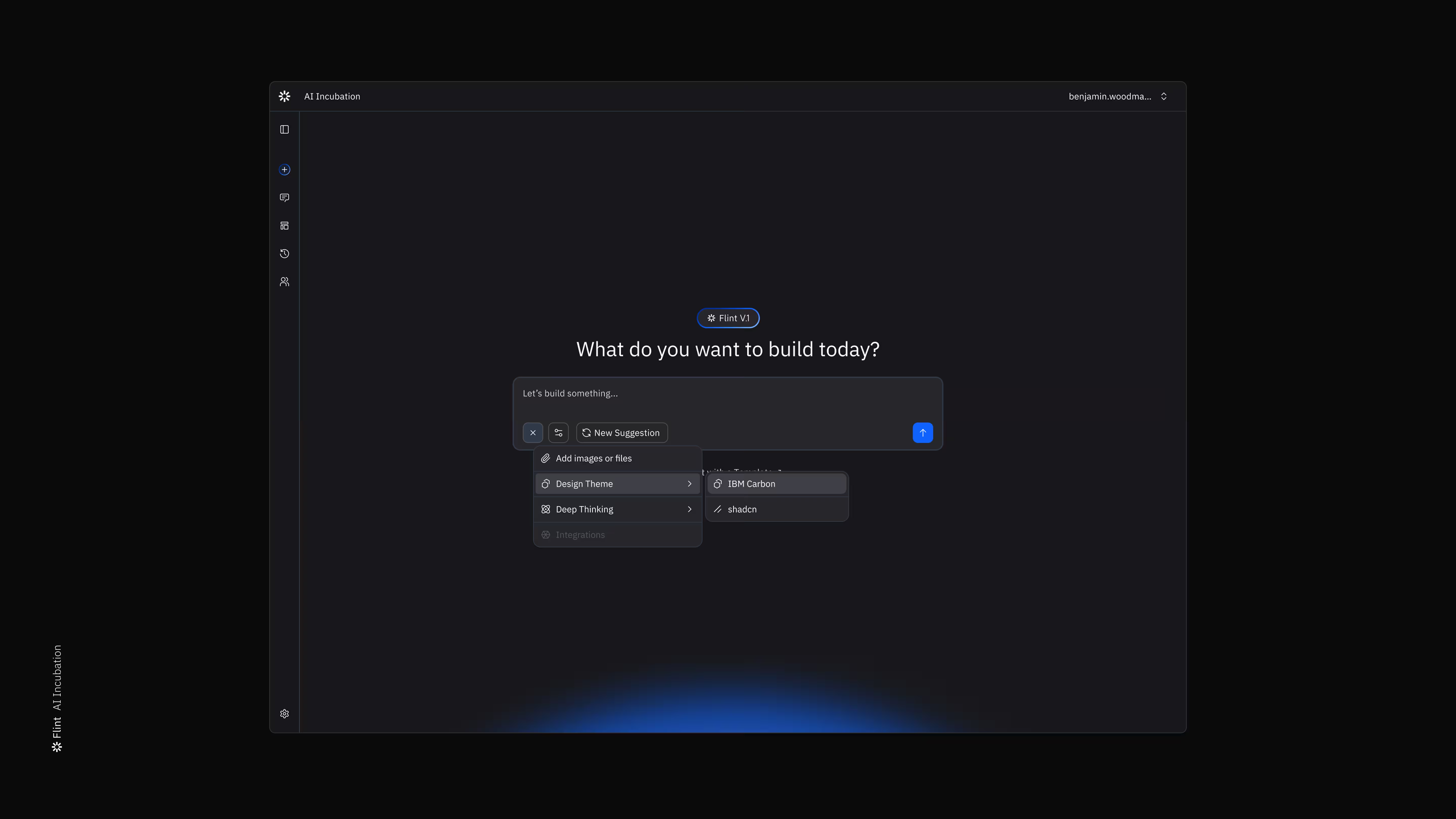

Flint — a natural-language → application builder that generates functional mini-apps from prompts while enforcing Carbon design system constraints. I led product design and strategy from 0→1, prototyping interaction patterns directly in production code and running weekly user testing to iterate rapidly.

Key insight

Users valued momentum over model sophistication.

Fast, editable drafts created trust and engagement, while slower “higher-quality” generations broke creative flow. Designing for rapid iteration and visible system feedback proved more important than maximising model reasoning depth.

Impact

→ MVP shipped in 4 weeks

→ 500+ internal users within 12 weeks

→ Average 5–10 apps generated per user

→ Established validation patterns for LLM-generated UI aligned to enterprise design systems

Non-technical teams across IBM wanted to prototype internal tools to improve workflows, but building even simple applications required engineering support. Existing no-code tools struggled to produce outputs aligned with IBM's Carbon design system and enterprise standards, which reduced trust and limited real adoption.

This created a core product tension: build for engineers who want production-ready code, or build for everyone else who just wants a fast draft? Our first instinct was to optimise for power and polish. That was wrong.

We shipped in four weeks. It broke immediately in predictable but instructive ways.

→ Outputs were dense and hard to parse.

→ Generated UIs frequently missed user intent.

→ Latency of roughly two minutes reduced perceived responsiveness.

→ Non-technical users did not understand why they would download code. Apps did not persist reliably across sessions.

We found that users valued momentum and iteration more than polish. Fast, editable drafts created trust and engagement, while slower 'higher-quality' generations broke creative flow. Designing for rapid iteration and visible system feedback proved more important than maximising model reasoning depth.

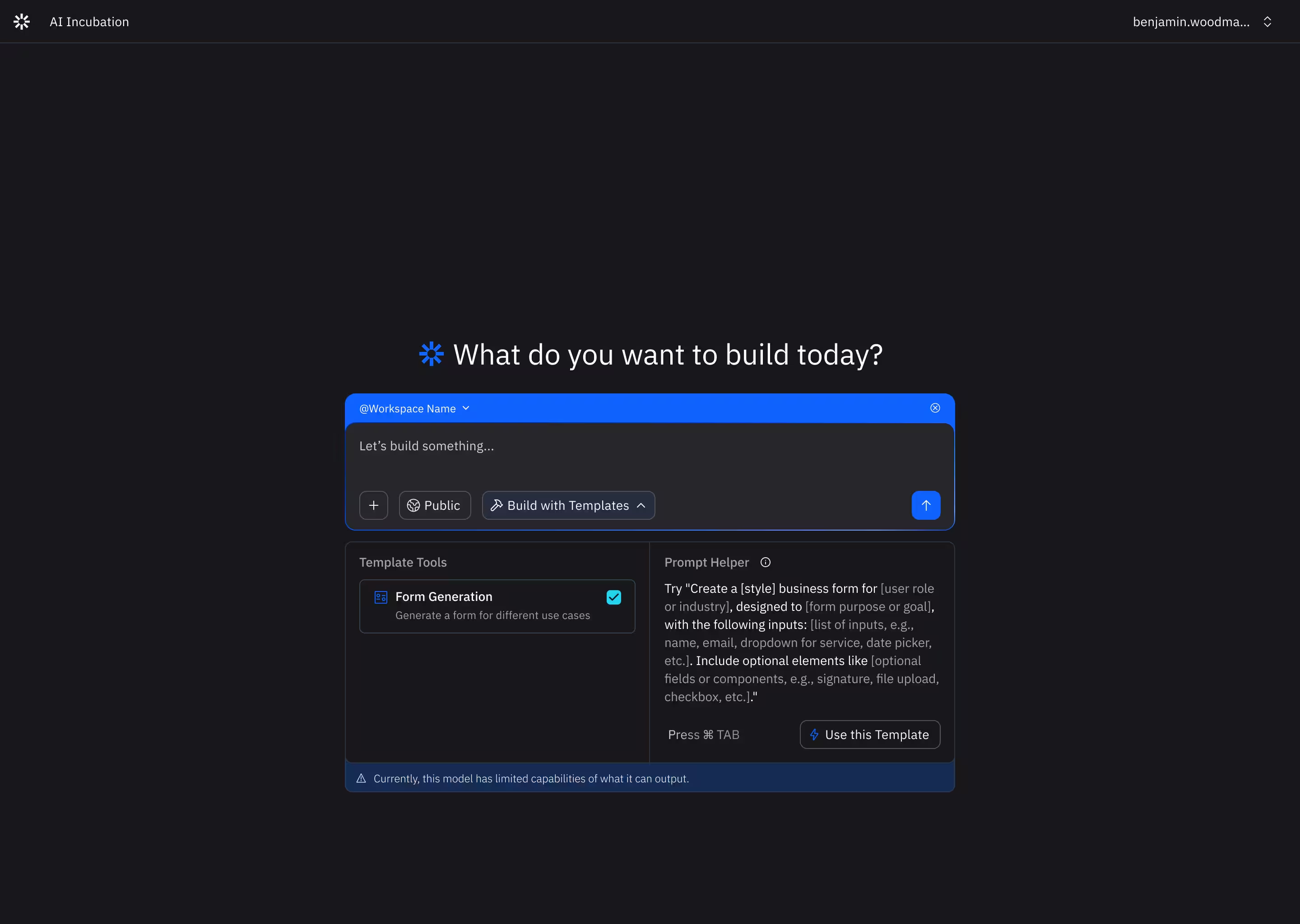

I assumed non-technical users needed structured guidance, so I built a Prompt Enhancer. Testing showed the opposite: most preferred writing rough concepts in their own words, some experienced a blank-screen effect, and others drafted in external models and pasted results in. The structured enhancer added friction. We removed it and expanded the input instead.

We introduced a 'Deep Thinking' mode that gave the model more time. It improved code quality, but not user experience. Users preferred a fast first draft they could refine. Longer generations broke flow. More reasoning often overfit to existing components without improving interaction quality. We optimised for speed by default and made deeper generation optional.

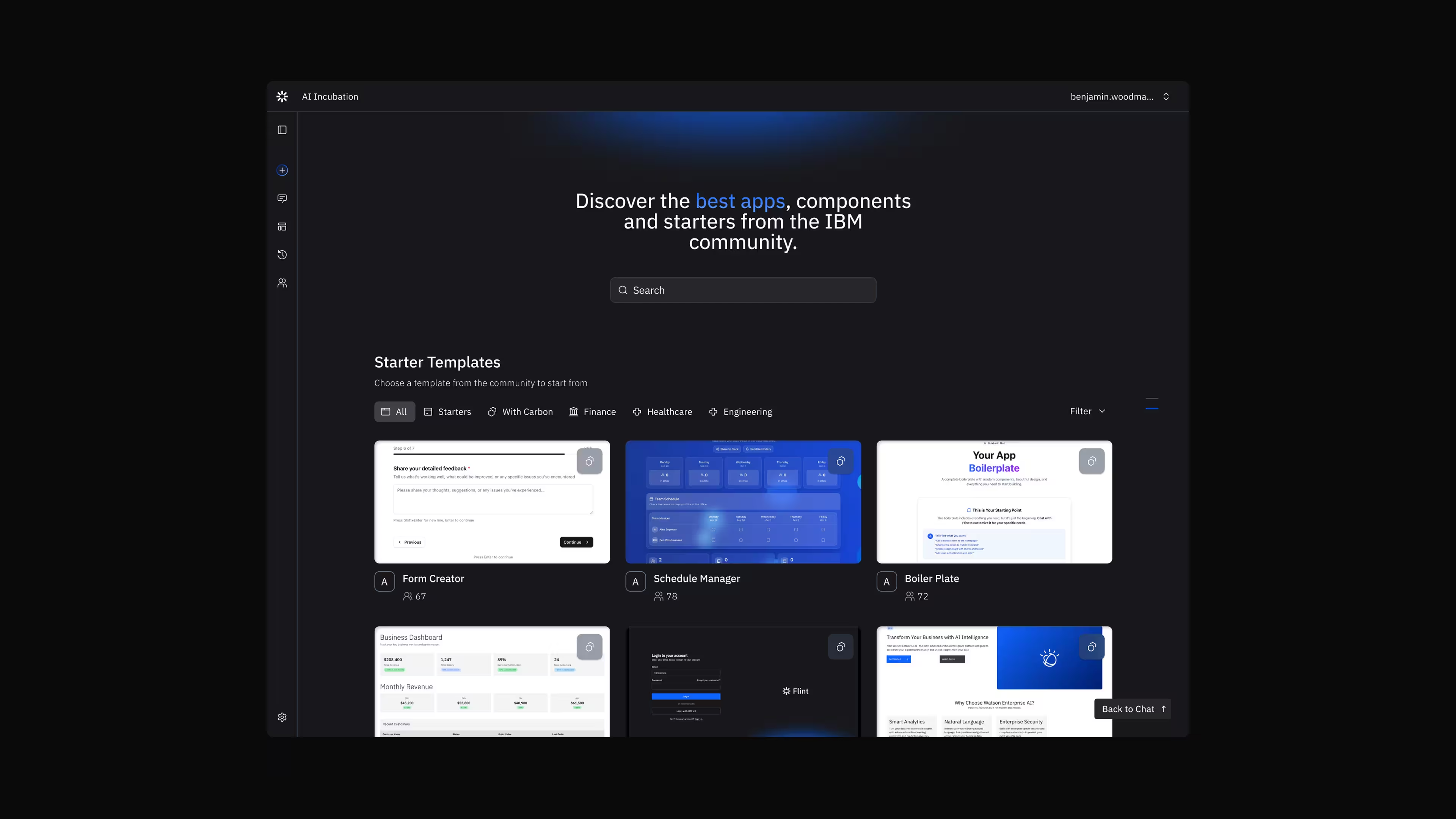

I assumed shared templates would accelerate adoption. In testing, users preferred building bespoke apps, but found some value in using repeat templates to understand the structure and prompts. Templates were useful for reference, not reuse. Ownership mattered more than remixing. We deprioritised template-sharing and focused on fast individual iteration.

We filled a 40+ user waitlist in three days. Within twelve weeks, Flint had over 500 internal users generating an average of 5 to 10 apps each. Usage was sustained over 12 weeks of daily shipping. Carbon MCP integration ensured all generated components aligned with IBM standards. Flint was internal, but outputs were frequently shown in client settings. Structural errors or brand misalignment reduced confidence in the tool.

Flint was initially positioned as a production code generator. That framing created anxiety: users questioned code quality, deployment expectations, and engineering standards. A research interview clarified the issue.

That reframed two things at once. Carbon alignment became a product requirement. We integrated directly with Carbon MCP, IBM Plex Sans, and approved colour tokens to ensure outputs matched IBM standards by default. And we repositioned Flint as a rapid internal prototyping tool, not a production system. That shift reduced pressure around code quality and increased willingness to experiment.

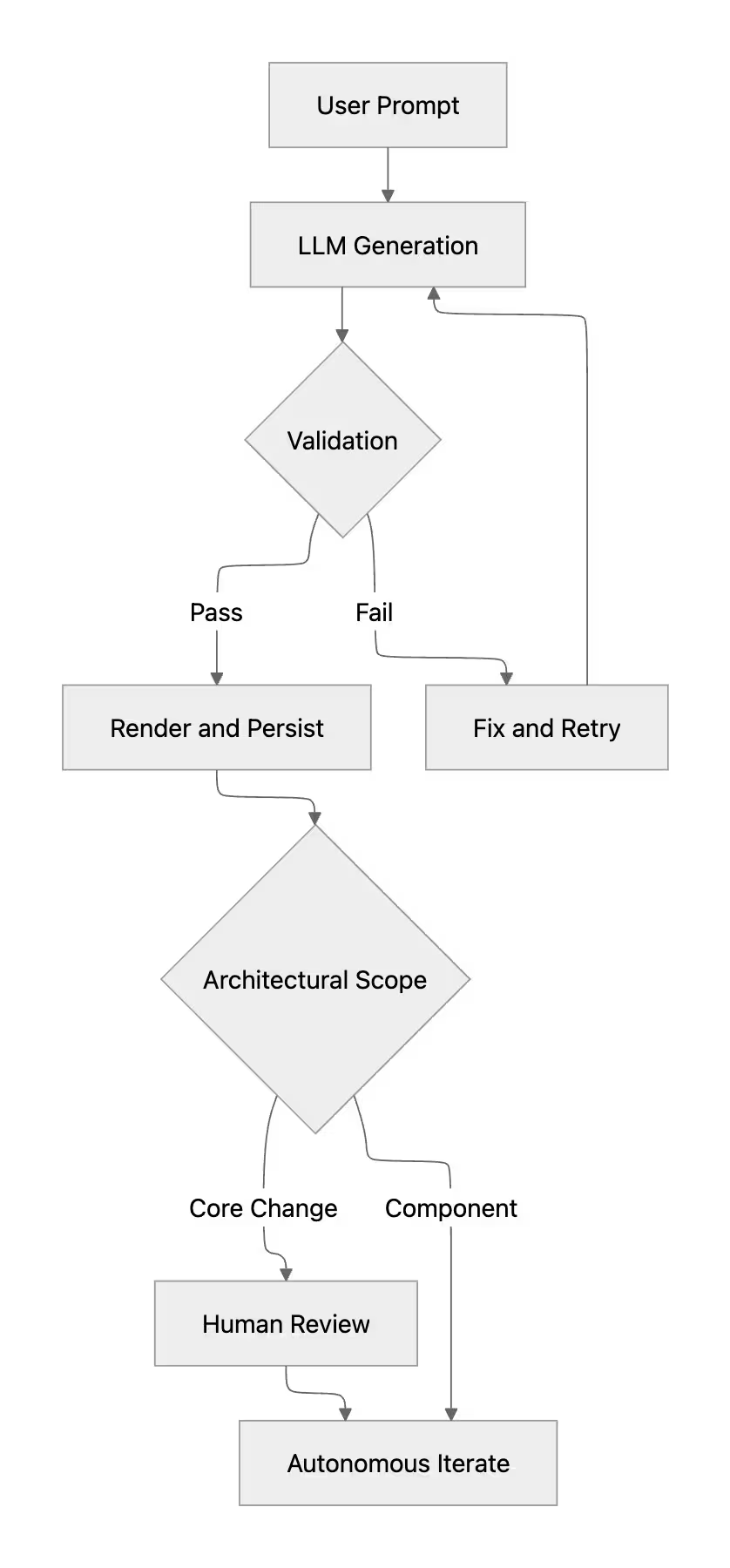

To prevent drift from IBM design standards, we introduced code-level evaluation using Carbon MCP and open-source Carbon references. Generated output was programmatically compared against:

Outputs failing validation were either blocked or passed back into the model with structured correction prompts. This created a lightweight evaluation loop: Generate → Validate → Correct → Regenerate.

We experimented with feeding parts of Flint's own codebase back into the model to generate refactors and small features. That was the moment the project changed scope. We had built a tool for non-technical users to create apps from prompts. What we had not anticipated was that the same loop applied to the product itself.

We tried narrowing context to specific files and summarising architectural state before regeneration. It reduced surface-level errors, but architectural drift remained without manual review. It led directly to the question that shaped Relay: if generative systems can operate across your product surface, what does structured supervision actually require?

Flint started as a code generation tool. It became an exercise in defining where generative systems are dependable and where they are not. Repositioning it as a prototyping tool unlocked adoption. Carbon alignment unlocked trust. Feeding it back into itself clarified its limits.

Recursive generation did not fail loudly. Small inconsistencies compounded over time. Stability depended less on model capability and more on where autonomy was allowed. Flint was reliable at scoped component synthesis, but it was unreliable at multi-session architectural reasoning. Defining that envelope mattered more than adding features.

Scaling to 500+ users came from aligning with how people work: fast drafts, visible errors, tight iteration loops. Not from increasing model complexity.