Relay - Designing Supervision Interfaces for AI Agents, 2026

Confidentiality Note

This case study describes a design exploration conducted within AI Incubation. Certain details have been generalised or omitted to respect confidentiality.

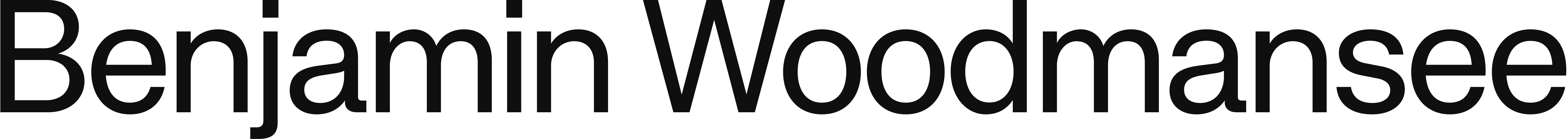

Role: Design Lead & PM

Scope: Led research, product strategy, and supervision model design for an agentic workspace operating across enterprise systems.

Owned:

→ Problem framing and hypothesis definition

→ Research design and synthesis

→ Supervision architecture for agent tasks

→ End-to-end prototyping

→ Executive alignment and roadmap decisions

→ Evaluation framework for agent reliability and supervision quality

Project Overview

This case study describes a confidential design exploration for supervising AI agents that operate across fragmented enterprise systems. The core premise: as agents become capable of taking action across tools and data, the differentiator shifts from 'what the model can do' to 'what the user can reliably understand, control, and recover from.'

Within AI Incubation, I led research and product design for an early prototype that combined an inspectable agent with a modular workspace canvas. The system aimed to make agent activity legible and safe enough for operational use through explicit state, provenance, and intervention points, without adding setup burden for time-constrained users.

Context

The AI product landscape is moving from conversational assistants toward systems that can plan, execute, and coordinate multi-step work. In enterprise environments, that work rarely happens in one place: context is distributed across tools and data sources—often with inconsistent data quality and constantly changing state.

This creates a new interaction design challenge: autonomy can reduce manual effort, but it can also increase cognitive load when users must monitor invisible background work, reconcile conflicting sources, or debug unexpected actions. In practice, agentic systems fail not only on capability but also on supervision: users need ways to verify, steer, and safely interrupt behavior without becoming full-time managers of automation.

The Problem

The exploration focused on one central question:

How might we design a supervision experience for multiple AI agents executing long-running work across disconnected enterprise systems — so users can delegate confidently while maintaining visibility, control, and trust?

In early user conversations (across technical and non-technical operational roles), a consistent pattern emerged: the pain wasn’t a lack of data or dashboards. It was the overhead of coordinating work across tools: tool switching, stitching context, and maintaining continuity from one action to the next. Time, not information, was the scarce resource.

The design constraints were sharp:

→ Agents could only help if users could understand what they were doing and why.

→ Any solution that increased setup complexity would fail adoption.

→ Without clear intervention and recovery, autonomy creates risk and hesitation instead of leverage.

Design Opportunity

The opportunity wasn’t to build “smarter analytics” or a more capable chat interface. It was to introduce new interaction paradigms for supervising delegated work — interfaces that treat agent actions as first-class, inspectable objects.

I framed the design space as:

→ Turning invisible background automation into something observable and accountable.

→ Giving users control without forcing them into micromanagement.

→ Making uncertainty explicit (freshness, source reliability, execution confidence).

→ Designing for recovery as a default, not an edge case.

Exploration

I started by mapping end-to-end workflows and identifying where coordination broke down across systems. From research synthesis, I formalised a model to structure agent work:

Tasks → Intents → Actions

→ Task: the user-recognisable unit of work

→ Intent: what success means and what constraints apply

→ Actions: concrete steps executed across tools and data sources

This structure wasn’t only conceptual — it became a design tool for defining what should be automated, what should be confirmed, and where escalation is required.
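The Tasks → Intents → Actions structure can be pictured as a small nested data model. The sketch below is illustrative only: the class names, the `Oversight` levels, and the example task are assumptions made for this write-up, not the prototype's actual schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class Oversight(Enum):
    """Hypothetical oversight levels attached to each action."""
    AUTOMATE = "automate"   # agent may proceed without asking
    CONFIRM = "confirm"     # agent must get user approval first
    ESCALATE = "escalate"   # agent hands the decision back to the user


@dataclass
class Action:
    """A concrete step executed against one tool or data source."""
    tool: str
    description: str
    oversight: Oversight = Oversight.CONFIRM


@dataclass
class Intent:
    """What success means and which constraints apply."""
    success_criteria: str
    constraints: list[str] = field(default_factory=list)
    actions: list[Action] = field(default_factory=list)


@dataclass
class Task:
    """The user-recognisable unit of work."""
    title: str
    intents: list[Intent] = field(default_factory=list)

    def actions_needing_user(self) -> list[Action]:
        """Return every action that is not fully automated."""
        return [
            action
            for intent in self.intents
            for action in intent.actions
            if action.oversight is not Oversight.AUTOMATE
        ]


# Illustrative task; the tool names and wording are made up.
task = Task(
    title="Prepare renewal summary",
    intents=[
        Intent(
            success_criteria="Summary reflects the latest contract terms",
            constraints=["read-only access to billing"],
            actions=[
                Action("crm", "Fetch account record", Oversight.AUTOMATE),
                Action("email", "Send summary to account owner", Oversight.CONFIRM),
            ],
        )
    ],
)
pending = task.actions_needing_user()
```

Attaching the oversight level to each action, rather than to the whole task, is one way to keep the "automate, confirm, or escalate" decision visible at the step where it matters.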

To stress-test the model, I ran adversarial prompt testing using ambiguous and conflicting requests, stale context, and scenarios that should trigger escalation rather than execution. These tests helped define escalation rules: when the system should ask, confirm, or act—and what evidence it must surface before doing so.
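One way to express escalation rules of this kind is a small decision function that returns both the decision and the evidence to surface. This is a minimal sketch under stated assumptions: the `RequestContext` fields, the 24-hour staleness threshold, and the reversibility check are hypothetical and were not taken from the prototype.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ASK = "ask"          # request clarification before doing anything
    CONFIRM = "confirm"  # propose the action and wait for approval
    ACT = "act"          # execute without interrupting the user


@dataclass
class RequestContext:
    """Hypothetical signals the agent has about a request."""
    ambiguous: bool            # more than one plausible reading
    sources_conflict: bool     # data sources disagree
    context_age_hours: float   # age of the newest supporting data
    reversible: bool           # the action can be undone


MAX_CONTEXT_AGE_HOURS = 24.0  # assumed threshold, for illustration only


def decide(ctx: RequestContext) -> tuple[Decision, list[str]]:
    """Return the decision plus the evidence to show the user."""
    evidence: list[str] = []

    # Ambiguity or conflicting sources: the agent cannot know what to do.
    if ctx.ambiguous:
        evidence.append("request is ambiguous")
    if ctx.sources_conflict:
        evidence.append("sources conflict")
    if evidence:
        return Decision.ASK, evidence

    # Stale context or irreversible actions: the agent proposes, user approves.
    if ctx.context_age_hours > MAX_CONTEXT_AGE_HOURS:
        evidence.append(f"context is {ctx.context_age_hours:.0f}h old")
    if not ctx.reversible:
        evidence.append("action cannot be undone")
    if evidence:
        return Decision.CONFIRM, evidence

    return Decision.ACT, ["context is fresh and action is reversible"]


stale = RequestContext(
    ambiguous=False, sources_conflict=False,
    context_age_hours=48, reversible=True,
)
decision, reasons = decide(stale)
```

Returning the evidence alongside the decision means the interface never has to explain an interruption after the fact; the reason travels with the request.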

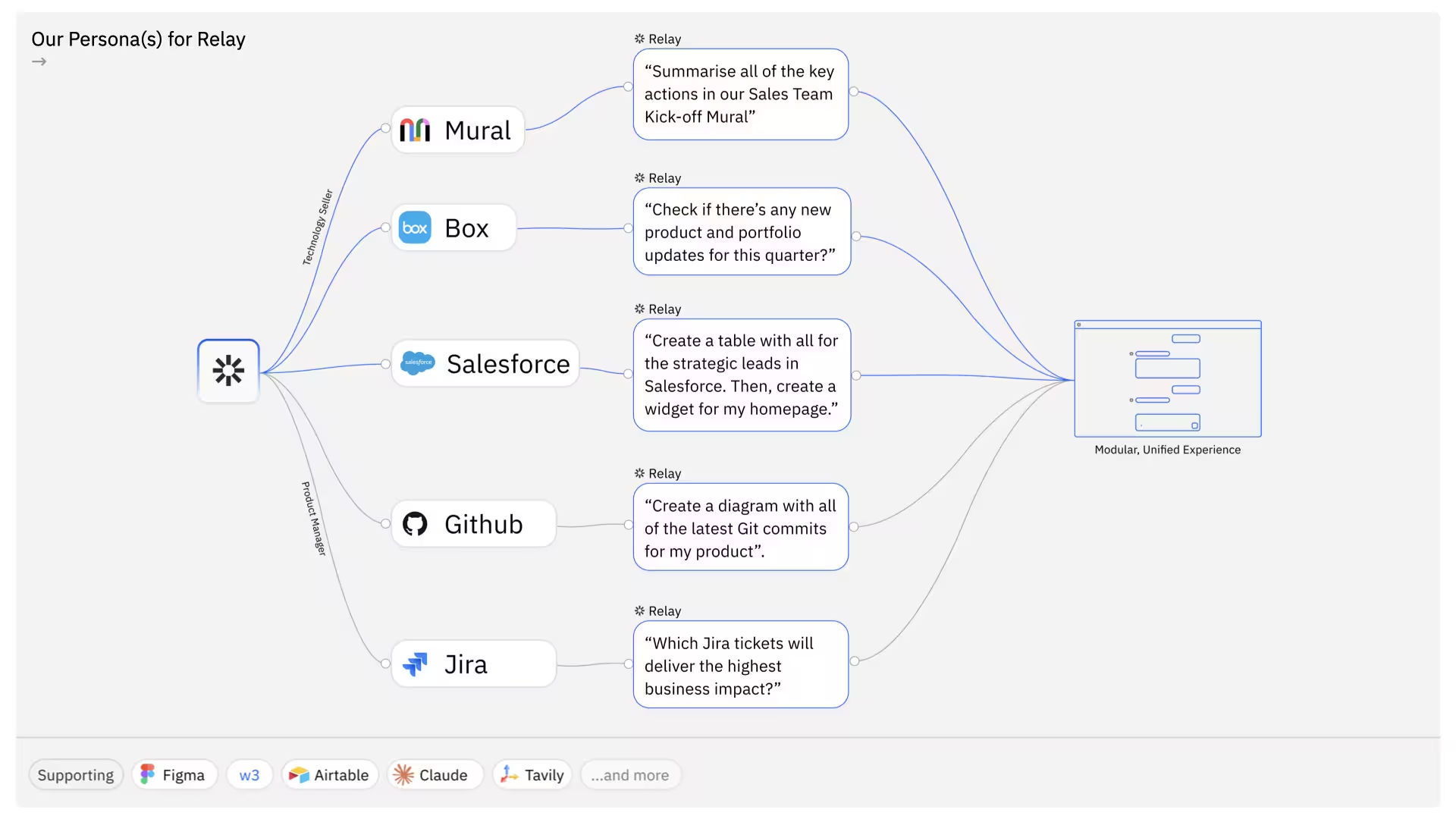

In parallel, I explored three high-level supervision models:

Modular: a workspace composed of widgets representing context, tasks, and monitors

Unified: a single end-to-end workflow experience for completing work in one place

Transformative: cross-system analysis and synthesis as a primary value driver

User testing and stakeholder review pushed the direction toward a system that was modular in structure, but guided in configuration — reducing blank-canvas burden while retaining flexibility.

Based on our research findings, we evolved the modular dashboard concept, but three new design challenges emerged. Each one led us to reshape part of the design after testing and evaluation.

What the Research Changed

1. “Spaces” for Separate Accounts

Hypothesis: sellers benefit from switchable account surfaces.

Finding: context-switching increased cognitive load and felt like managing browser tabs.

Decision: move to a sidebar holding ‘projects’ or ‘spaces’ to manage specific content.

2. Parallel Agent Tabs

Hypothesis: sellers benefit from supervising agents in parallel tabs.

Finding: users struggled to supervise parallel threads when state was implicit.

Decision: consolidate into a single Tasks view with clear state transitions.

3. Manual Integration Onboarding (Packs & Skills)

Hypothesis: Configuring systems, widgets and platform integrations is challenging.

Finding: Setup friction stalled activation, especially for non-technical users.

Decision: introduce pre-configured Packs & Skills tailored by role, and auto-generate widgets on behalf of users. This was a significant structural change to the supervision model.
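A role-based Pack can be thought of as a lookup from role to a pre-selected set of skills and widgets. The sketch below is a hypothetical illustration: the `Pack` shape, the role names, and the skill names are assumptions; only the widget kinds (context, tasks, monitors) echo the modular concept described earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pack:
    """Hypothetical pre-configured bundle for one role."""
    role: str
    skills: tuple[str, ...]
    widgets: tuple[str, ...]


# Illustrative catalogue; roles and skill names are made up.
PACKS = {
    "sales": Pack(
        role="sales",
        skills=("account_research", "renewal_summary"),
        widgets=("tasks", "context"),
    ),
    "operations": Pack(
        role="operations",
        skills=("incident_triage",),
        widgets=("tasks", "monitors"),
    ),
}

# Shown when no pack matches, so the user never starts from a blank canvas.
FALLBACK_WIDGETS = ("tasks",)


def default_workspace(role: str) -> list[str]:
    """Return the widgets to auto-generate for a role."""
    pack = PACKS.get(role)
    widgets = pack.widgets if pack else FALLBACK_WIDGETS
    return list(widgets)


ops_widgets = default_workspace("operations")
```

The fallback matters as much as the catalogue: an unknown role still lands on a usable workspace instead of an empty one.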

Key Design Insights

1) Autonomy without observability increases cognitive load

Even when output quality was high, users hesitated if they couldn’t see progress, dependencies, or what the agent was basing decisions on. Invisible work feels risky.

2) The failure mode isn’t always wrong — it’s outdated

Plausible results built from stale context were more trust-damaging than obvious errors, because users couldn’t detect them early. Freshness and provenance must be designed, not assumed.
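Designing for freshness and provenance can start with attaching a source and retrieval time to every piece of evidence an agent uses. The following is a minimal sketch, assuming a hypothetical `Evidence` record and a 24-hour freshness window chosen purely for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Evidence:
    """Hypothetical provenance record for one value the agent relied on."""
    source: str              # system the value came from
    retrieved_at: datetime   # when the agent last read it
    value: str


def freshness_label(
    evidence: Evidence,
    now: datetime,
    max_age: timedelta = timedelta(hours=24),  # assumed window
) -> str:
    """Build a short label the interface can show next to a result."""
    age = now - evidence.retrieved_at
    hours = int(age.total_seconds() // 3600)
    status = "stale" if age > max_age else "fresh"
    return f"{evidence.source}, {hours}h old ({status})"


# Fixed timestamps keep the example deterministic.
now = datetime(2026, 1, 10, 12, 0, tzinfo=timezone.utc)
contract = Evidence(
    source="billing",
    retrieved_at=datetime(2026, 1, 8, 12, 0, tzinfo=timezone.utc),
    value="Renewal date: 2026-03-01",
)
label = freshness_label(contract, now)
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the label reproducible in tests and in audit views.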

3) Long-running tasks require a legible state model

Users struggled to supervise parallel threads when state was implicit. Consolidating into a single Tasks view with clear transitions reduced confusion and improved confidence.
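A legible state model can be as simple as an explicit table of allowed transitions. The sketch below is an assumption-laden illustration: the state names and the transition table are hypothetical and do not describe the prototype's actual states.

```python
from enum import Enum


class TaskState(Enum):
    """Hypothetical states for a long-running agent task."""
    QUEUED = "queued"
    RUNNING = "running"
    NEEDS_INPUT = "needs_input"
    PAUSED = "paused"
    DONE = "done"
    FAILED = "failed"


# Every legal move is listed; anything absent is rejected.
ALLOWED = {
    TaskState.QUEUED: {TaskState.RUNNING},
    TaskState.RUNNING: {
        TaskState.NEEDS_INPUT,
        TaskState.PAUSED,
        TaskState.DONE,
        TaskState.FAILED,
    },
    TaskState.NEEDS_INPUT: {TaskState.RUNNING, TaskState.FAILED},
    TaskState.PAUSED: {TaskState.RUNNING, TaskState.FAILED},
    TaskState.DONE: set(),
    TaskState.FAILED: {TaskState.QUEUED},  # retry re-queues the task
}


def transition(current: TaskState, target: TaskState) -> TaskState:
    """Move to the target state, or raise if the move is not allowed."""
    if target not in ALLOWED[current]:
        raise ValueError(
            f"illegal transition: {current.value} -> {target.value}"
        )
    return target


state = TaskState.QUEUED
state = transition(state, TaskState.RUNNING)
state = transition(state, TaskState.NEEDS_INPUT)
```

Because the table is data rather than scattered conditionals, the same structure could drive both the agent runtime and the labels shown in a Tasks view.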

Future Directions

This exploration points toward an emerging category: coordination layers for AI work. As models become more capable, product differentiation shifts to supervision quality—interfaces that make agent behavior:

→ Observable: users can see what’s happening and why

→ Interruptible: users can intervene without breaking the system

→ Accountable: actions have traceable evidence and history

→ Recoverable: the system supports safe rollback and clear next steps

Longer-term, I believe agent experiences will require a new interaction contract between human and system — where uncertainty, recency, and delegation boundaries are explicit. Designing that contract is as important as designing any single feature.